Seeds are simultaneously one of the most used and one of the most misunderstood features in Stable Diffusion.

Let's talk about what they are, and then look at how we can use them to improve our results.

What is a seed?

Seeds aren't unique to Stable Diffusion.

You might have heard of them if you've played Minecraft.

When a player starts a new game, the game assigns them a pseudo-random seed which is used to generate the entire world. I say pseudo-random because a computer program on its own can't produce truly random numbers: it runs a deterministic algorithm whose output only looks random, which is kind of crazy.

Anyways, in Minecraft the same seed value will generate the same world every time. A player who finds a cool world can share the seed with their friend, who can use that seed to generate the exact same world.
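Here's a minimal sketch of that idea in Python, using only the standard library's `random` module. The `generate_world` function and its tile names are made up for illustration and have nothing to do with how Minecraft actually builds worlds; the point is only that a seeded generator gives the same output every time.

```python
import random

def generate_world(seed, size=8):
    """Return a tiny 'world' (a row of terrain tiles) derived only from the seed."""
    rng = random.Random(seed)  # private generator, fully determined by the seed
    tiles = ["water", "sand", "grass", "forest", "mountain"]  # hypothetical tiles
    return [rng.choice(tiles) for _ in range(size)]

world_a = generate_world(seed=1234)
world_b = generate_world(seed=1234)  # same seed, shared with a friend
world_c = generate_world(seed=5678)  # a different seed

print(world_a)
print(world_a == world_b)  # same seed, same world
print(world_a == world_c)  # different seed, almost certainly a different world
```

Nothing random happens between the two calls with seed 1234: the seed fully decides the sequence of "random" choices.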

In Stable Diffusion, instead of a game world, a seed produces a unique noise image.

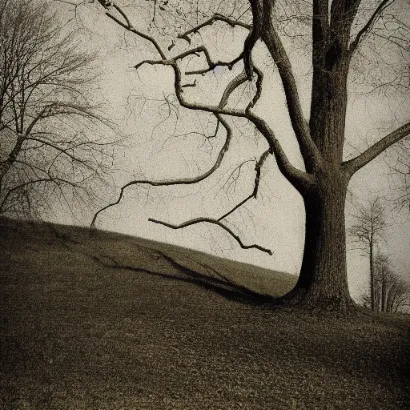

That's exactly what it sounds like, an image of noise:

prompt: cool image

seed: 1

This isn't supposed to look like anything but random noise.
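To make the "seed in, noise out" idea concrete, here's a small sketch that turns a seed into a grid of Gaussian noise values. It's a toy: `make_noise` is a hypothetical helper, the grid is tiny, and Stable Diffusion generates its noise in a compressed latent space rather than as a plain pixel grid like this.

```python
import random

def make_noise(seed, width=4, height=4):
    """Return a height x width grid of Gaussian noise values for the given seed."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(width)] for _ in range(height)]

noise_1 = make_noise(seed=1)
noise_1_again = make_noise(seed=1)
noise_2 = make_noise(seed=2)

print(noise_1 == noise_1_again)  # same seed, same noise
print(noise_1 == noise_2)        # different seed, different noise

# Print the first row, rounded so it's easier to read.
print([round(value, 2) for value in noise_1[0]])
```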

The secret sauce of Stable Diffusion is that it "de-noises" this image to look like things we know about.

And since the same de-noising method is used every time, the same seed with the same prompt & settings will always produce the same image.

The steps parameter in Stable Diffusion interfaces is how many times this algorithm is applied.

With every step, SD produces an image that better matches what the model learned from its training images about the things in your prompt.
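A rough way to picture steps is a loop that starts from seeded noise and nudges it a little closer to a goal on every pass. The sketch below is only a toy stand-in under heavy assumptions: `toy_denoise`, the fixed `target` list, and the 0.2 step size are all invented here. A real sampler has no target to aim at; it uses the model's prediction of the noise at each step.

```python
import random

def toy_denoise(seed, target, steps):
    """Toy stand-in for a sampler: start from seeded noise, move toward `target`."""
    rng = random.Random(seed)
    image = [rng.gauss(0.0, 1.0) for _ in target]  # the starting "noise image"
    for _ in range(steps):
        # Each step removes part of the remaining difference from the target.
        image = [x + 0.2 * (t - x) for x, t in zip(image, target)]
    return image

def distance(a, b):
    """Sum of absolute differences between two equally long lists."""
    return sum(abs(x - y) for x, y in zip(a, b))

target = [0.0, 0.5, 1.0, 0.5]  # hypothetical "what the prompt describes"
few = toy_denoise(seed=1, target=target, steps=5)
many = toy_denoise(seed=1, target=target, steps=28)

print(distance(few, target) > distance(many, target))            # more steps, closer result
print(many == toy_denoise(seed=1, target=target, steps=28))      # same inputs, same output
```

Because the starting noise and the update rule are both fixed, running it again with the same seed, target, and step count gives an identical result.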

What does that mean for us as Stable Diffusion users?

- You don't have to specify the seed. If you enter -1 as the seed (in AUTOMATIC1111's Stable Diffusion WebUI), a random seed will be used.

- Controlling the seed can help you generate similar images. This is the best way to experiment with the other parameters or prompt variations.

- img2img essentially replaces the starting noise image with the image you give Stable Diffusion. That's why it's so effective.
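The img2img point can be sketched as a different choice of starting values. This is a simplified illustration, not how any particular interface implements it: `starting_point` is a hypothetical helper, images are flat lists of numbers, and `strength` is an assumed knob (loosely similar to the denoising strength setting some interfaces expose) that controls how much seeded noise gets mixed into your image.

```python
import random

def starting_point(seed, init_image=None, strength=0.75, size=4):
    """Return the values a toy sampler would start from.

    Without init_image (txt2img): pure seeded noise.
    With init_image (img2img): the input image mixed with seeded noise.
    """
    length = len(init_image) if init_image is not None else size
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, 1.0) for _ in range(length)]
    if init_image is None:
        return noise
    # strength=0.0 keeps the image untouched, strength=1.0 is pure noise.
    return [(1.0 - strength) * p + strength * n for p, n in zip(init_image, noise)]

photo = [0.1, 0.4, 0.9, 0.3]  # hypothetical input image

print(starting_point(seed=1))                                   # txt2img start
print(starting_point(seed=1, init_image=photo, strength=0.5))   # img2img start
```

In this toy version the seed still matters for img2img, since it decides the noise that gets mixed in.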

How to use seeds

You can use the same techniques regardless of which Stable Diffusion interface or model you are using.

Controlling the seed

By keeping the seed the same across generations, you can tweak the prompt to change small parts of the image:

Model: Stable Diffusion v1.4

Seed: 208513106212

Sampling steps: 28

CFG scale: 8
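If you script your generations, the same idea looks like the sketch below: one fixed block of settings, with only the prompt changing between requests. The field names (`prompt`, `seed`, `steps`, `cfg_scale`) are assumptions modeled on the txt2img request format of AUTOMATIC1111's optional web API, so check your own interface's documentation before relying on them. The prompts are made-up examples, and nothing here actually sends a request.

```python
import json

# Settings that stay fixed across every generation.
BASE_SETTINGS = {
    "seed": 208513106212,
    "steps": 28,
    "cfg_scale": 8,
}

def build_requests(prompts, settings=BASE_SETTINGS):
    """Return one request payload per prompt, all sharing the same settings."""
    return [{"prompt": prompt, **settings} for prompt in prompts]

# Hypothetical prompt variations: only one detail changes.
prompts = [
    "portrait of a woman with red hair",
    "portrait of a woman with blue hair",
]

payloads = build_requests(prompts)
for payload in payloads:
    print(json.dumps(payload))
```

Keeping the settings in one place makes it hard to change the seed by accident while you're comparing prompts.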